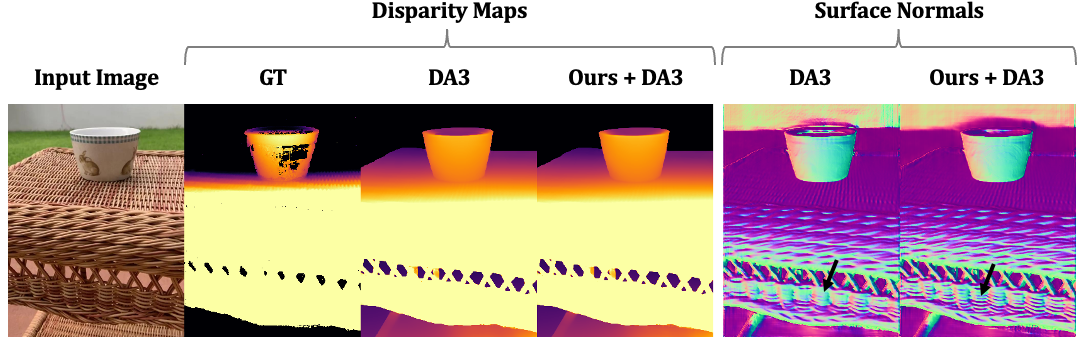

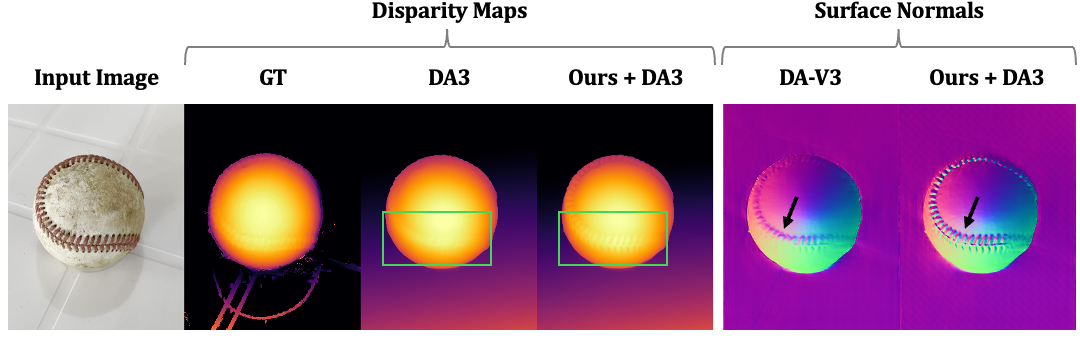

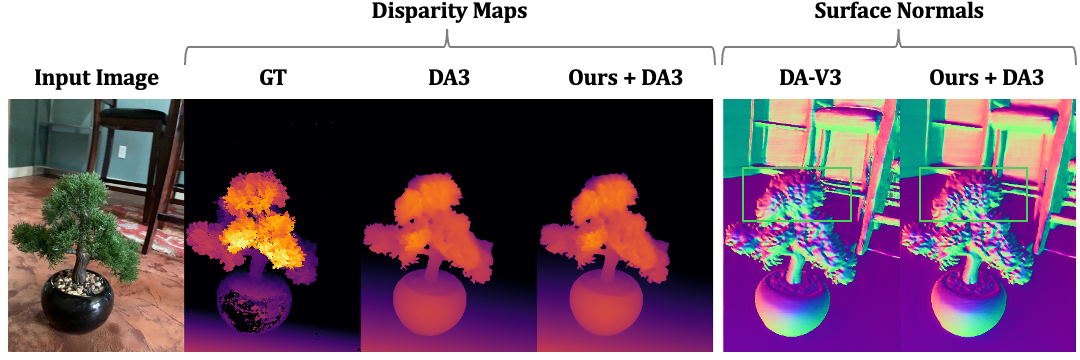

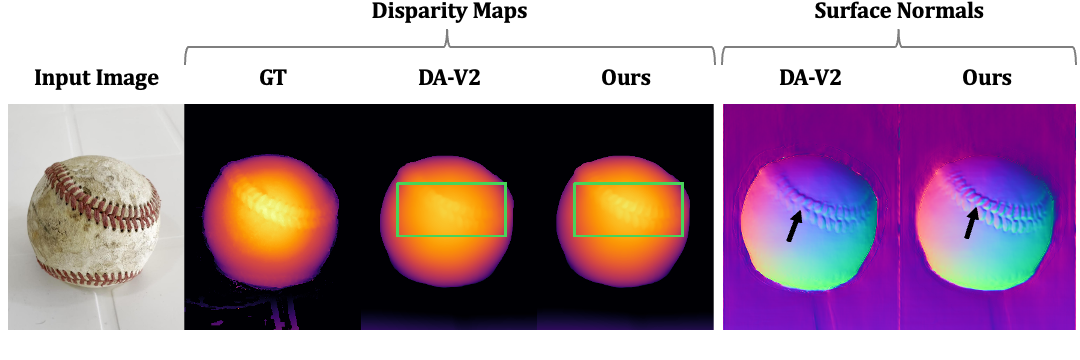

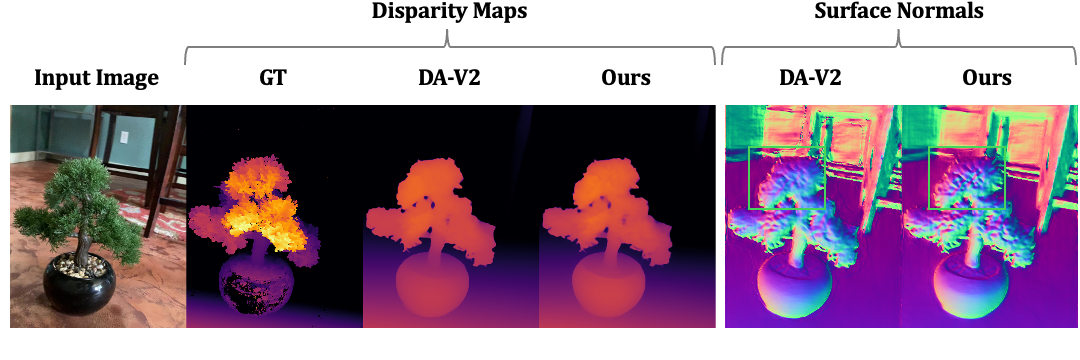

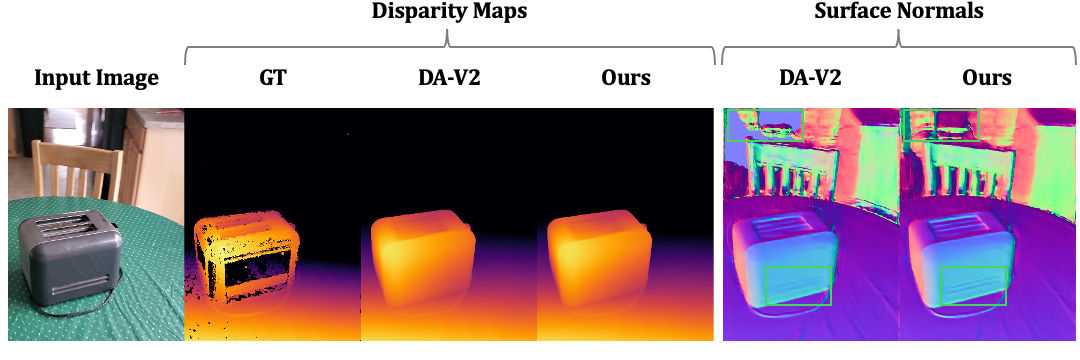

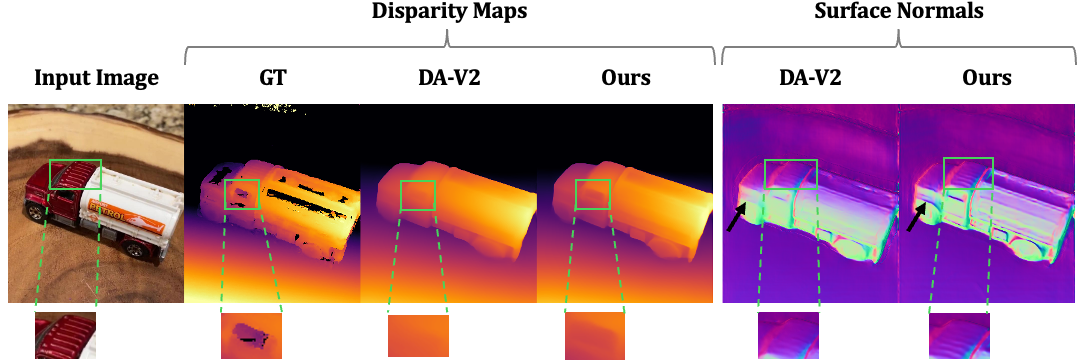

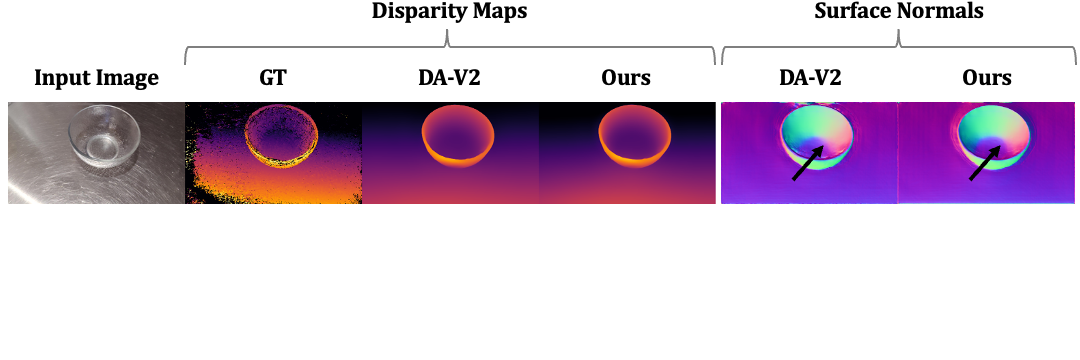

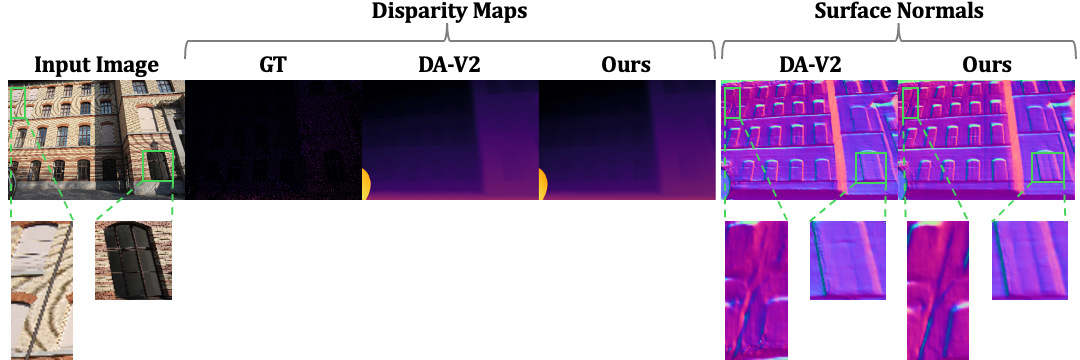

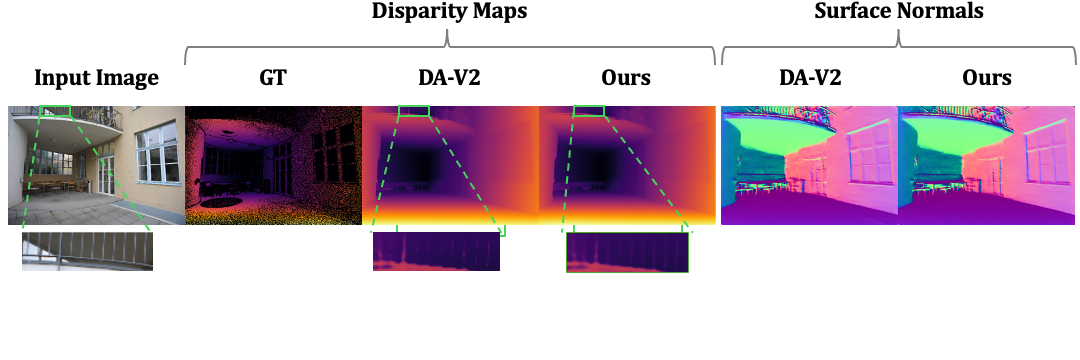

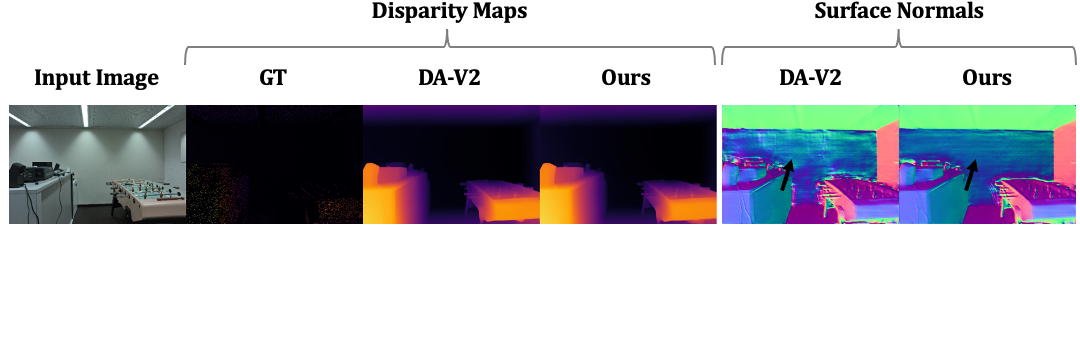

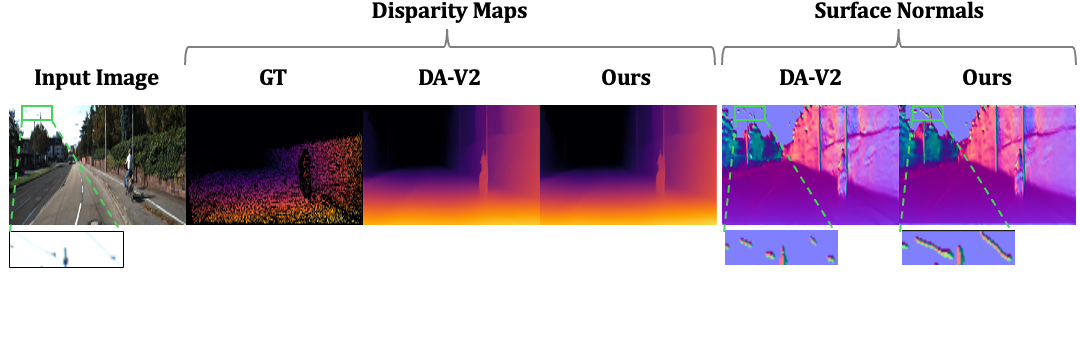

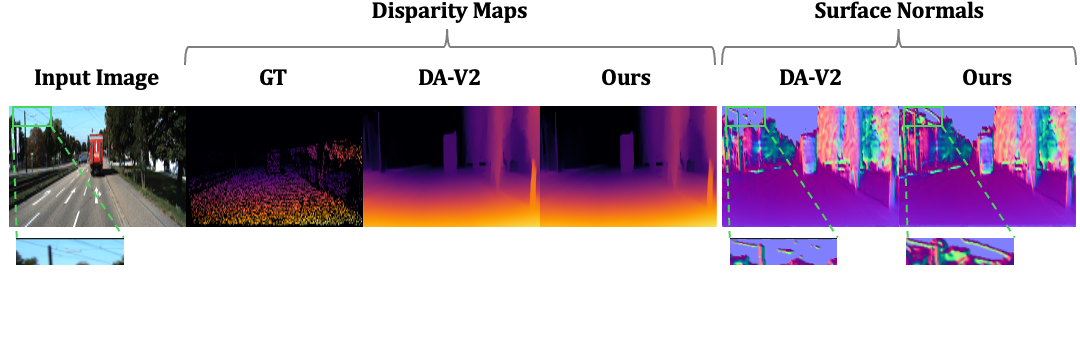

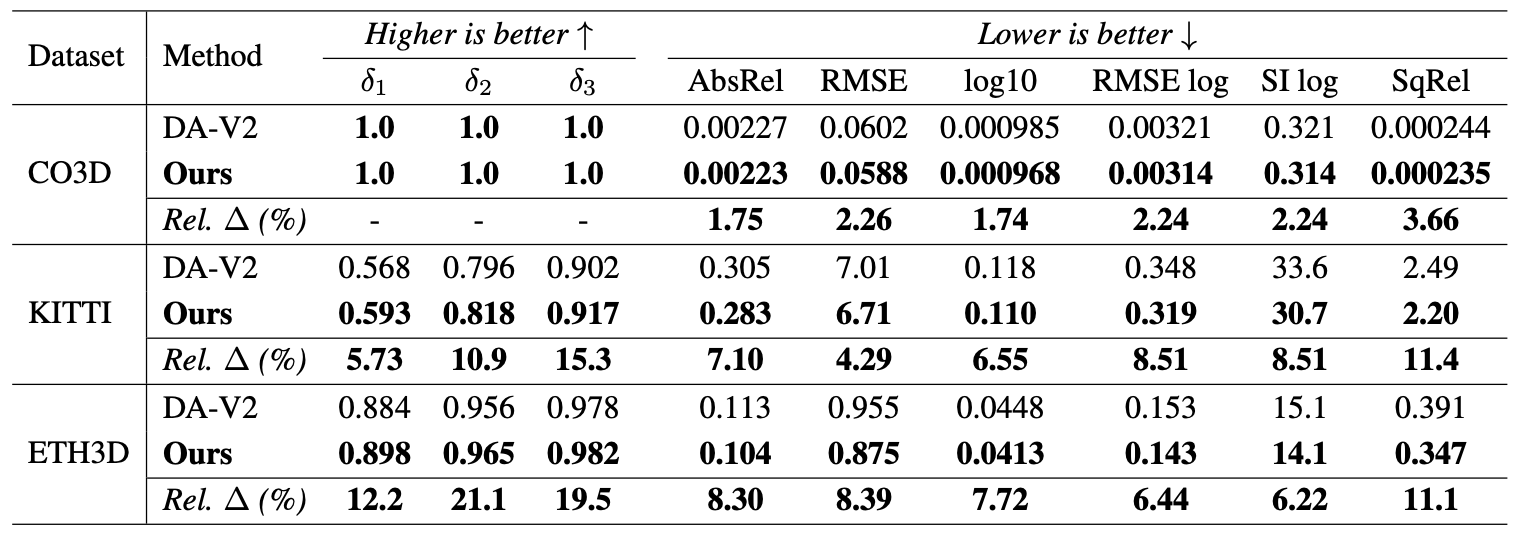

Monocular depth estimation remains challenging, as foundation models such as Depth Anything V2 (DA-V2) struggle with real-world images that are far from the training distribution.

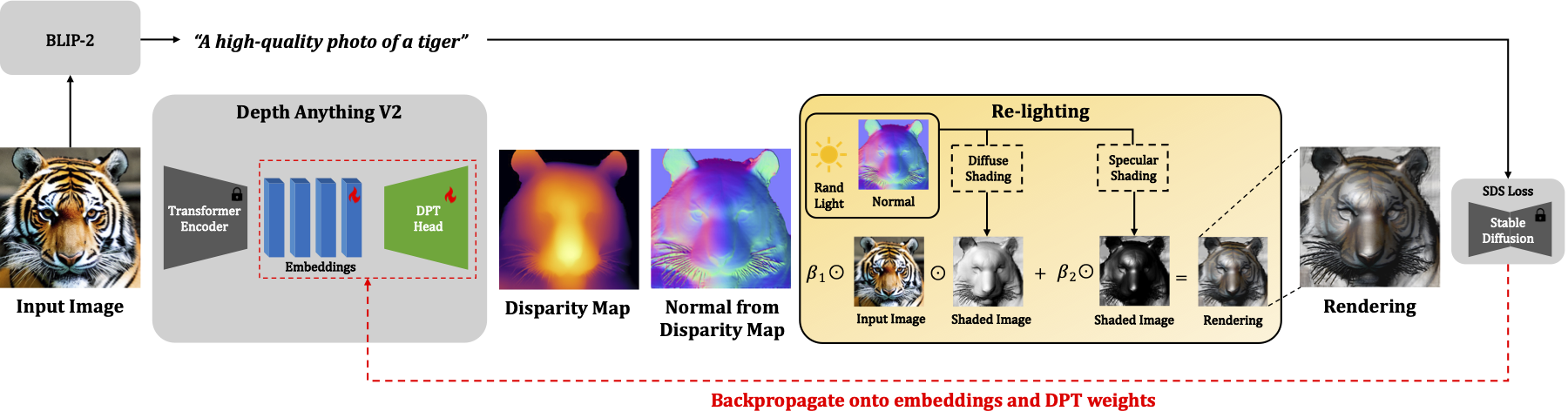

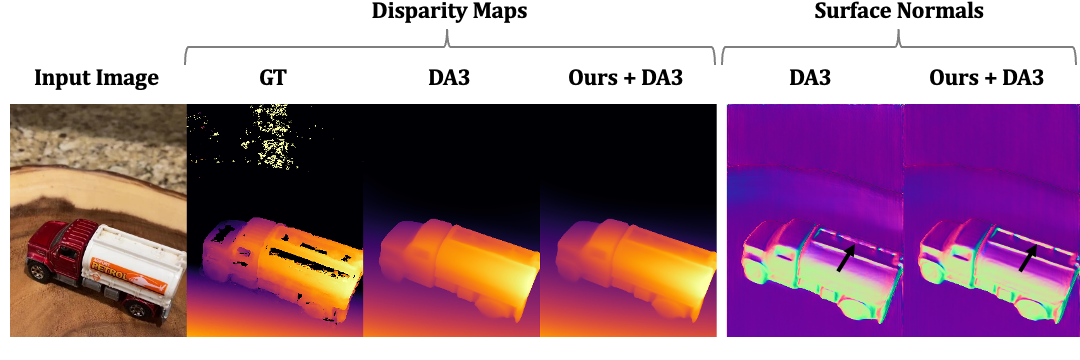

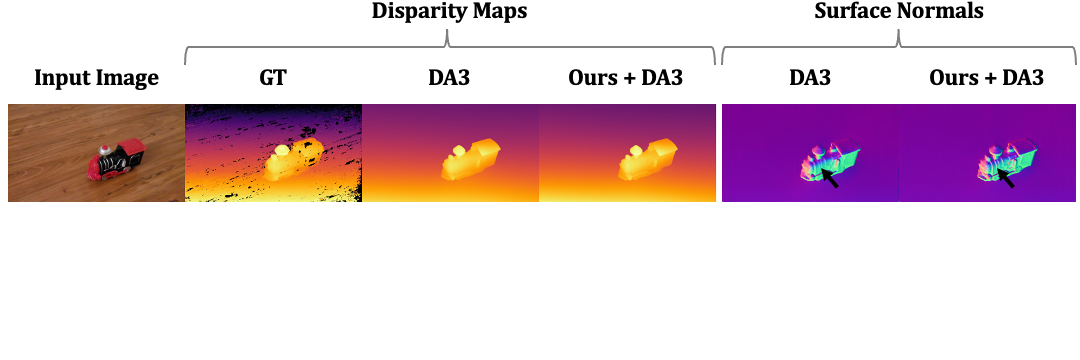

We introduce Re-Depth Anything, a test-time self-supervision framework that bridges this domain gap by fusing foundation models with the powerful priors of large-scale 2D diffusion models. Our method performs label-free refinement directly on the input image by re-lighting the predicted depth map and augmenting the input. This re-synthesis method replaces classical photometric reconstruction by leveraging shape from shading (SfS) cues in a new, generative context with Score Distillation Sampling (SDS). To prevent optimization collapse, our framework updates only intermediate embeddings and the decoder's weights, rather than optimizing the depth tensor directly or fine-tuning the full model.

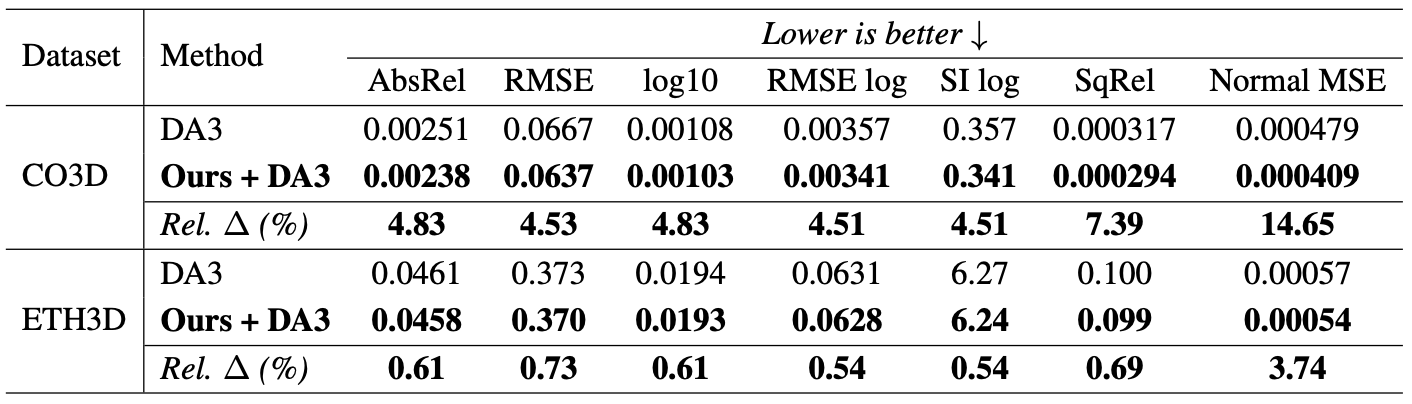

Across diverse benchmarks, Re-Depth Anything yields substantial gains in depth accuracy and realism over DA-V2, and applied on top of Depth Anything 3 (DA3) achieves state-of-the-art results, showcasing new avenues for self-supervision by geometric reasoning.